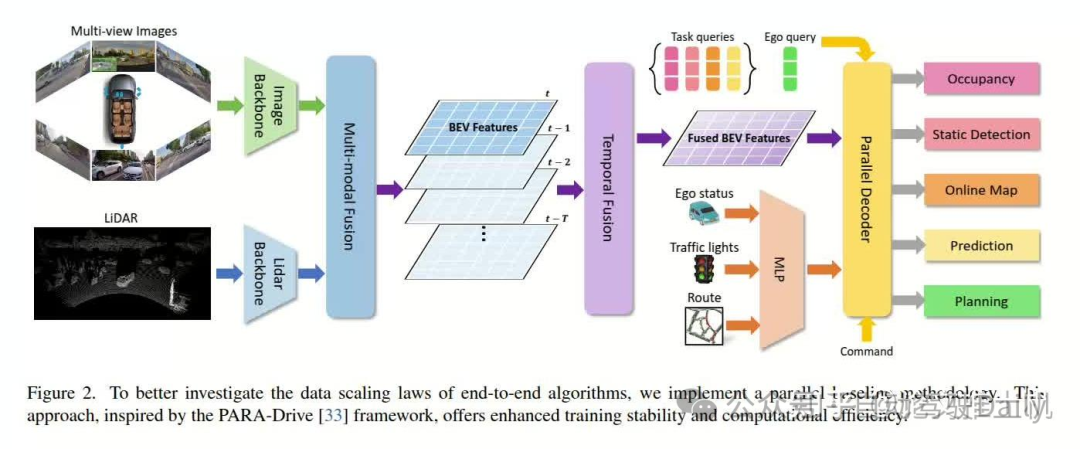

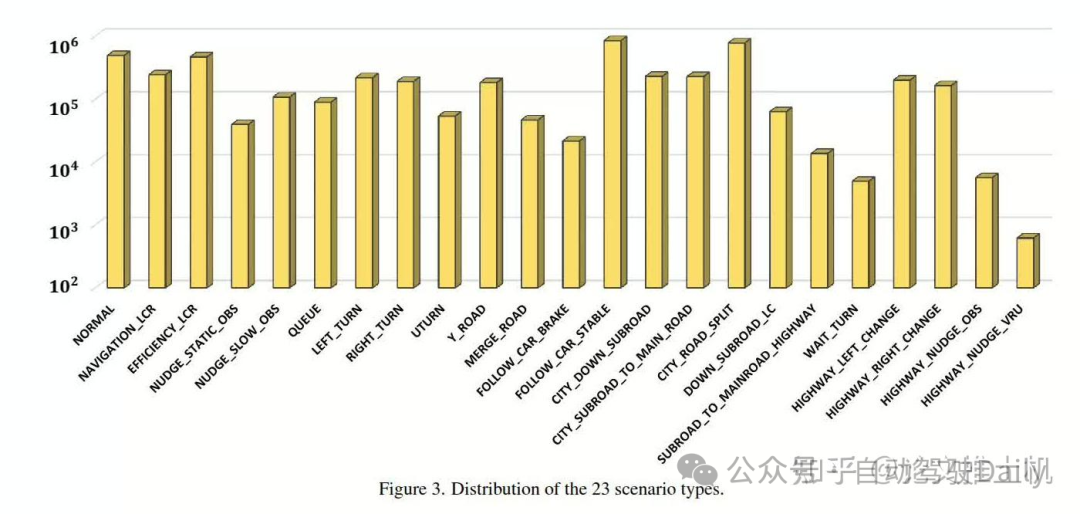

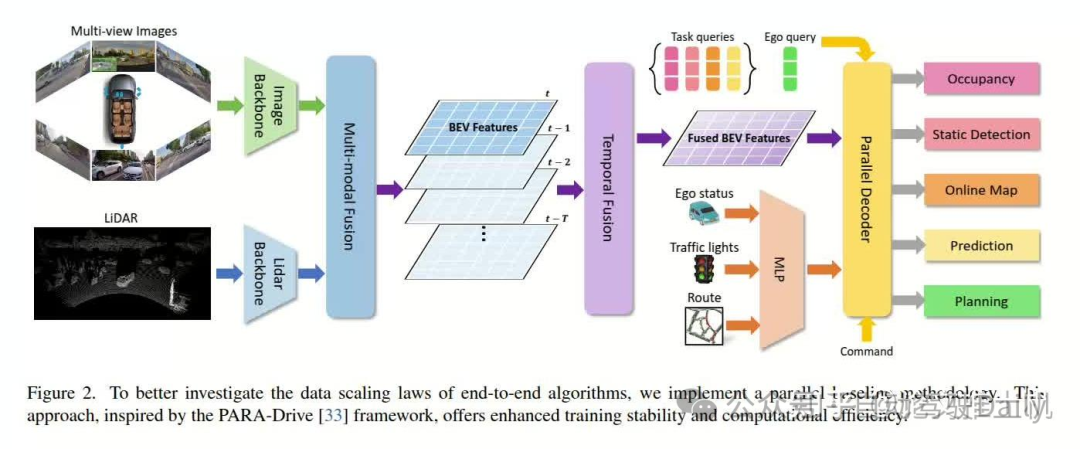

Zheng, Y., Xia, Z., Zhang, Q., Zhang, T., Lu, B., Huo, X., ... & Zhao, D. (2024). Preliminary Investigation into Data Scaling Laws for Imitation Learning-Based End-to-End Autonomous Driving.arXiv preprint arXiv:2412.02689. 主要回答了三个问题:Is there a data scaling law in the field of end-to-end autonomous driving?How does data quantity influence model performance when scaling training data?Can data scaling endow autonomous driving cars with the generalization to new scenarios and actions?答案是:A power-law data scaling law in open-loop metric but different in closed-loop metric.Data distribution plays a key role in the data scaling law of end-to-end autonomous driving.The data scaling law endows the model with combinatorial generalization, which powers the model's zero-shot ability for new scenarios.通过实验证明,本文证明了log-log scaling law存在,数据分布对模型训练结果有影响。scaling law能够提升模型的泛化能力。文章的模型结构如下: 然后把数据分成了23类:

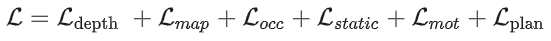

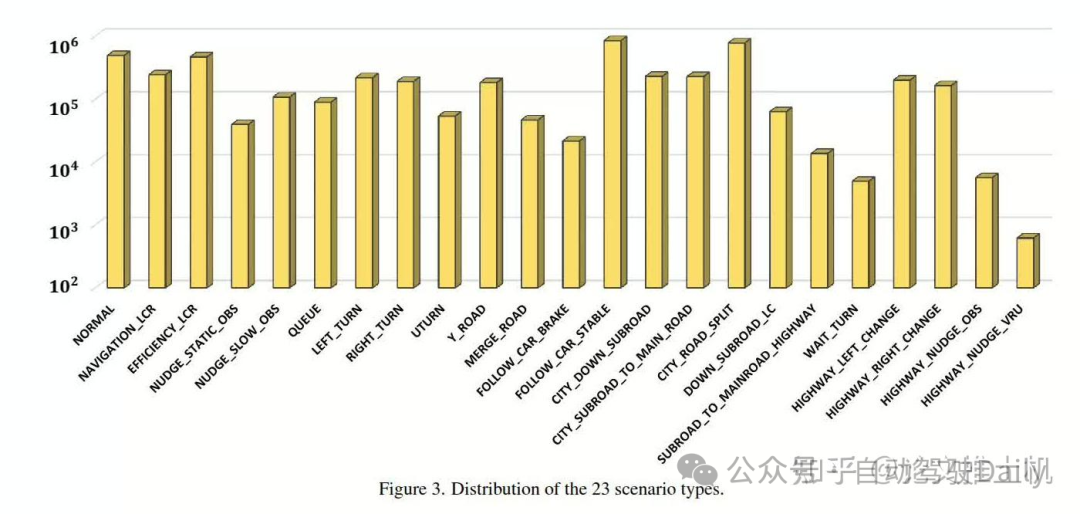

然后把数据分成了23类: 模型结构非常简约, 如图中所示,就不多说了。最后的loss是:

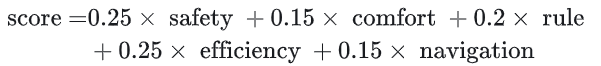

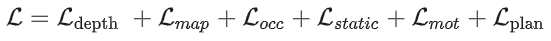

模型结构非常简约, 如图中所示,就不多说了。最后的loss是: planning的ego query用于预测自车trajectory:“Then the queries are used to predict multi-modal future planning trajectories with corresponding scores.”其中planniing score是:

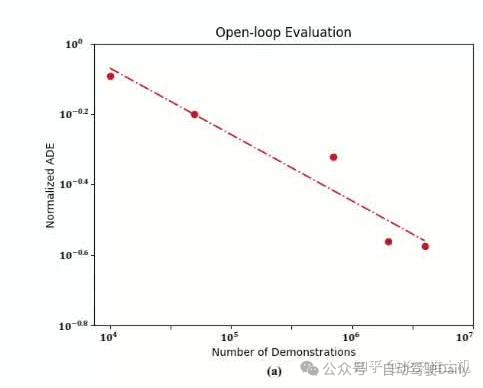

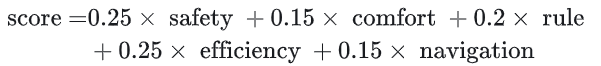

planning的ego query用于预测自车trajectory:“Then the queries are used to predict multi-modal future planning trajectories with corresponding scores.”其中planniing score是: 主要看看实验结果。power law: 无论是开环测试还是闭环测试,都存在power law曲线,本文的线性曲线是xy轴都取了log的。从open loop的测试结果来看,随着数据增长,关系保持线性。

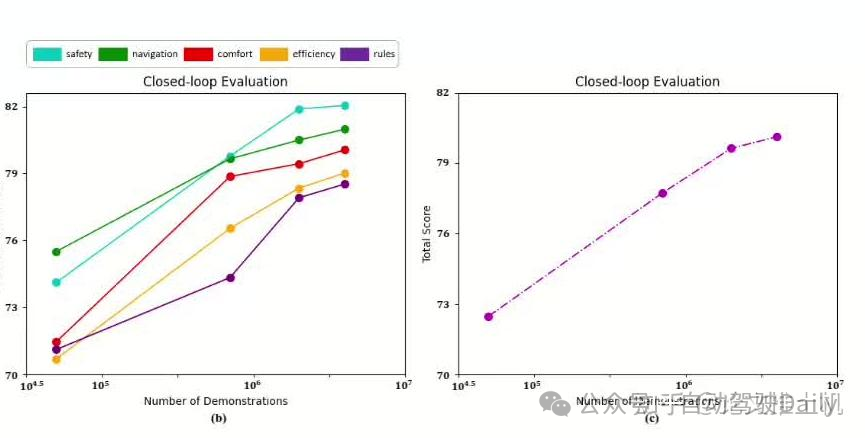

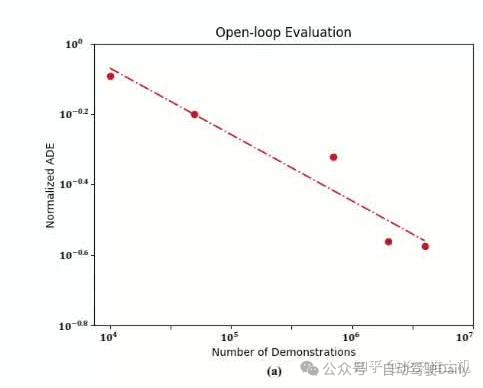

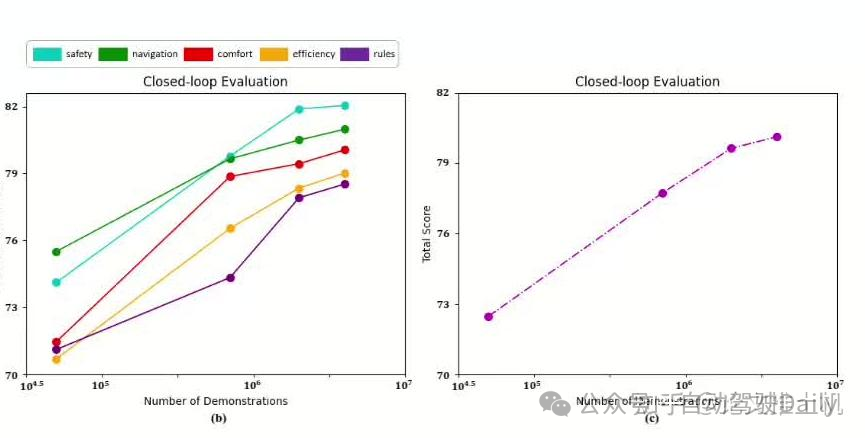

主要看看实验结果。power law: 无论是开环测试还是闭环测试,都存在power law曲线,本文的线性曲线是xy轴都取了log的。从open loop的测试结果来看,随着数据增长,关系保持线性。 但是在close loop测试中,线性关系在2 million数据之后发生变化:

但是在close loop测试中,线性关系在2 million数据之后发生变化: 可以看到,各个指标得分虽然随数据增长score仍然在提升,但是到达某个阈值之后,线性曲线增长变慢:“about 2 million training demonstrations), the growth rate decelerates as data continues to expand”。然后看下data quality和系统表现之间的关系,这里挑出了两组场景DOWN SUBROAD LC and SUBROAD TO MAINROAD HIGHWAY作为测试场景,这两个场景的系统表现比较差,所以文章将他们作为测试集来看随着数据增加,系统表现在这两个场景下的表现是否发生变化:结论是有变化。随着数据增加,这两个场景的系统表现也在提升:“The results reveal that for these long-tailed scenarios, doubling the specific training data while keeping the total data volume constant led to improvements in planning performance”。当数据干到原来的四倍的时候,效果有点明显:“Furthermore, quadrupling the specific training data yielded even more substantial gains, with performance improvements between 22.8% and 32.9%.”然后再来看泛化能力,这里提出来两个场景的数据不用于训练,来看系统在没有见过的场景下的表现能力,为了能有更好的对比,我们再找出来一些类似的场景放在一起看看:

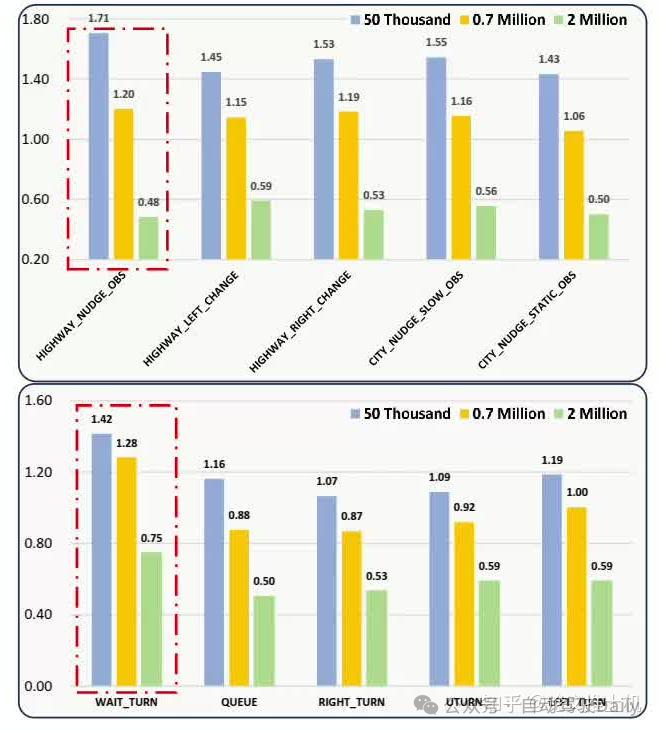

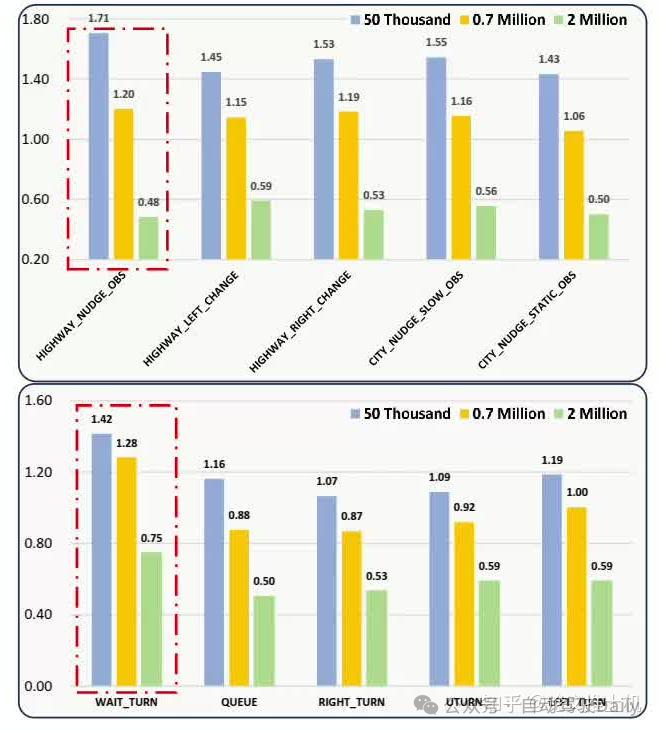

可以看到,各个指标得分虽然随数据增长score仍然在提升,但是到达某个阈值之后,线性曲线增长变慢:“about 2 million training demonstrations), the growth rate decelerates as data continues to expand”。然后看下data quality和系统表现之间的关系,这里挑出了两组场景DOWN SUBROAD LC and SUBROAD TO MAINROAD HIGHWAY作为测试场景,这两个场景的系统表现比较差,所以文章将他们作为测试集来看随着数据增加,系统表现在这两个场景下的表现是否发生变化:结论是有变化。随着数据增加,这两个场景的系统表现也在提升:“The results reveal that for these long-tailed scenarios, doubling the specific training data while keeping the total data volume constant led to improvements in planning performance”。当数据干到原来的四倍的时候,效果有点明显:“Furthermore, quadrupling the specific training data yielded even more substantial gains, with performance improvements between 22.8% and 32.9%.”然后再来看泛化能力,这里提出来两个场景的数据不用于训练,来看系统在没有见过的场景下的表现能力,为了能有更好的对比,我们再找出来一些类似的场景放在一起看看: 可以看到,在没有见过的数据集上面,所致额数据提升,系统表现也在提升,在类似的场景的数据集上系统表现的变化也是和数据相关的。然后还有一个有趣的现象,文章还发现,通过某些单一场景的训练,系统还学到了额外的泛化能力,他能在单一场景的组合表达的场景中提升系统表现:“By learning high-speed driving and low-speed nudging obstacles separately from the training data, the model acquired the ability to generalize to high-speed HIGHWAY NUDGE OBS scenarios; through learning to turn and queuing at red lights, the model developed the capability to generalize to WAIT TURN scenarios”。但是呢,其实这里的结论还是存在一定前提得,网络的设计和任务的定义也会在很大程度上影响着数据对网络的提升效果,如果网络设计不合理,或者参数量有限,能够存储的信息也就有限,所以也不一定能学到特别好的泛化能力。

可以看到,在没有见过的数据集上面,所致额数据提升,系统表现也在提升,在类似的场景的数据集上系统表现的变化也是和数据相关的。然后还有一个有趣的现象,文章还发现,通过某些单一场景的训练,系统还学到了额外的泛化能力,他能在单一场景的组合表达的场景中提升系统表现:“By learning high-speed driving and low-speed nudging obstacles separately from the training data, the model acquired the ability to generalize to high-speed HIGHWAY NUDGE OBS scenarios; through learning to turn and queuing at red lights, the model developed the capability to generalize to WAIT TURN scenarios”。但是呢,其实这里的结论还是存在一定前提得,网络的设计和任务的定义也会在很大程度上影响着数据对网络的提升效果,如果网络设计不合理,或者参数量有限,能够存储的信息也就有限,所以也不一定能学到特别好的泛化能力。

然后把数据分成了23类:

然后把数据分成了23类: 模型结构非常简约, 如图中所示,就不多说了。最后的loss是:

模型结构非常简约, 如图中所示,就不多说了。最后的loss是: planning的ego query用于预测自车trajectory:“Then the queries are used to predict multi-modal future planning trajectories with corresponding scores.”其中planniing score是:

planning的ego query用于预测自车trajectory:“Then the queries are used to predict multi-modal future planning trajectories with corresponding scores.”其中planniing score是: 主要看看实验结果。power law: 无论是开环测试还是闭环测试,都存在power law曲线,本文的线性曲线是xy轴都取了log的。从open loop的测试结果来看,随着数据增长,关系保持线性。

主要看看实验结果。power law: 无论是开环测试还是闭环测试,都存在power law曲线,本文的线性曲线是xy轴都取了log的。从open loop的测试结果来看,随着数据增长,关系保持线性。 但是在close loop测试中,线性关系在2 million数据之后发生变化:

但是在close loop测试中,线性关系在2 million数据之后发生变化: 可以看到,各个指标得分虽然随数据增长score仍然在提升,但是到达某个阈值之后,线性曲线增长变慢:“about 2 million training demonstrations), the growth rate decelerates as data continues to expand”。然后看下data quality和系统表现之间的关系,这里挑出了两组场景DOWN SUBROAD LC and SUBROAD TO MAINROAD HIGHWAY作为测试场景,这两个场景的系统表现比较差,所以文章将他们作为测试集来看随着数据增加,系统表现在这两个场景下的表现是否发生变化:结论是有变化。随着数据增加,这两个场景的系统表现也在提升:“The results reveal that for these long-tailed scenarios, doubling the specific training data while keeping the total data volume constant led to improvements in planning performance”。当数据干到原来的四倍的时候,效果有点明显:“Furthermore, quadrupling the specific training data yielded even more substantial gains, with performance improvements between 22.8% and 32.9%.”然后再来看泛化能力,这里提出来两个场景的数据不用于训练,来看系统在没有见过的场景下的表现能力,为了能有更好的对比,我们再找出来一些类似的场景放在一起看看:

可以看到,各个指标得分虽然随数据增长score仍然在提升,但是到达某个阈值之后,线性曲线增长变慢:“about 2 million training demonstrations), the growth rate decelerates as data continues to expand”。然后看下data quality和系统表现之间的关系,这里挑出了两组场景DOWN SUBROAD LC and SUBROAD TO MAINROAD HIGHWAY作为测试场景,这两个场景的系统表现比较差,所以文章将他们作为测试集来看随着数据增加,系统表现在这两个场景下的表现是否发生变化:结论是有变化。随着数据增加,这两个场景的系统表现也在提升:“The results reveal that for these long-tailed scenarios, doubling the specific training data while keeping the total data volume constant led to improvements in planning performance”。当数据干到原来的四倍的时候,效果有点明显:“Furthermore, quadrupling the specific training data yielded even more substantial gains, with performance improvements between 22.8% and 32.9%.”然后再来看泛化能力,这里提出来两个场景的数据不用于训练,来看系统在没有见过的场景下的表现能力,为了能有更好的对比,我们再找出来一些类似的场景放在一起看看: 可以看到,在没有见过的数据集上面,所致额数据提升,系统表现也在提升,在类似的场景的数据集上系统表现的变化也是和数据相关的。然后还有一个有趣的现象,文章还发现,通过某些单一场景的训练,系统还学到了额外的泛化能力,他能在单一场景的组合表达的场景中提升系统表现:“By learning high-speed driving and low-speed nudging obstacles separately from the training data, the model acquired the ability to generalize to high-speed HIGHWAY NUDGE OBS scenarios; through learning to turn and queuing at red lights, the model developed the capability to generalize to WAIT TURN scenarios”。但是呢,其实这里的结论还是存在一定前提得,网络的设计和任务的定义也会在很大程度上影响着数据对网络的提升效果,如果网络设计不合理,或者参数量有限,能够存储的信息也就有限,所以也不一定能学到特别好的泛化能力。

可以看到,在没有见过的数据集上面,所致额数据提升,系统表现也在提升,在类似的场景的数据集上系统表现的变化也是和数据相关的。然后还有一个有趣的现象,文章还发现,通过某些单一场景的训练,系统还学到了额外的泛化能力,他能在单一场景的组合表达的场景中提升系统表现:“By learning high-speed driving and low-speed nudging obstacles separately from the training data, the model acquired the ability to generalize to high-speed HIGHWAY NUDGE OBS scenarios; through learning to turn and queuing at red lights, the model developed the capability to generalize to WAIT TURN scenarios”。但是呢,其实这里的结论还是存在一定前提得,网络的设计和任务的定义也会在很大程度上影响着数据对网络的提升效果,如果网络设计不合理,或者参数量有限,能够存储的信息也就有限,所以也不一定能学到特别好的泛化能力。

文章转载自公众号: 自动驾驶Daily

原文链接:https://mp.weixin.qq.com/s/kagSaIpPEpXUwsNFBLCkOg