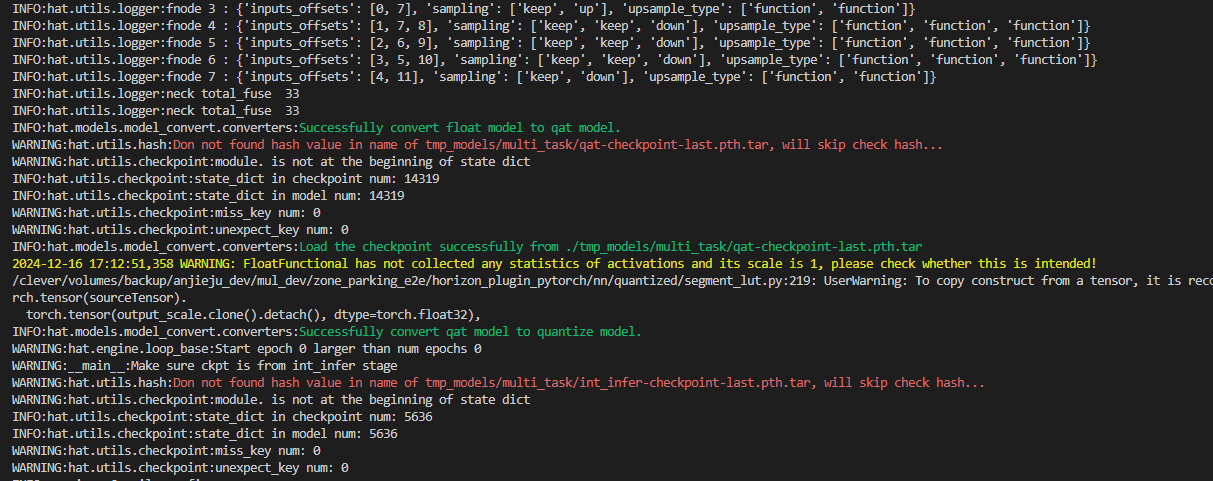

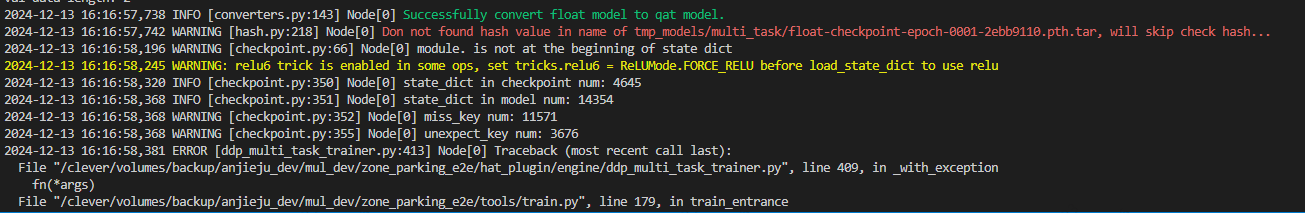

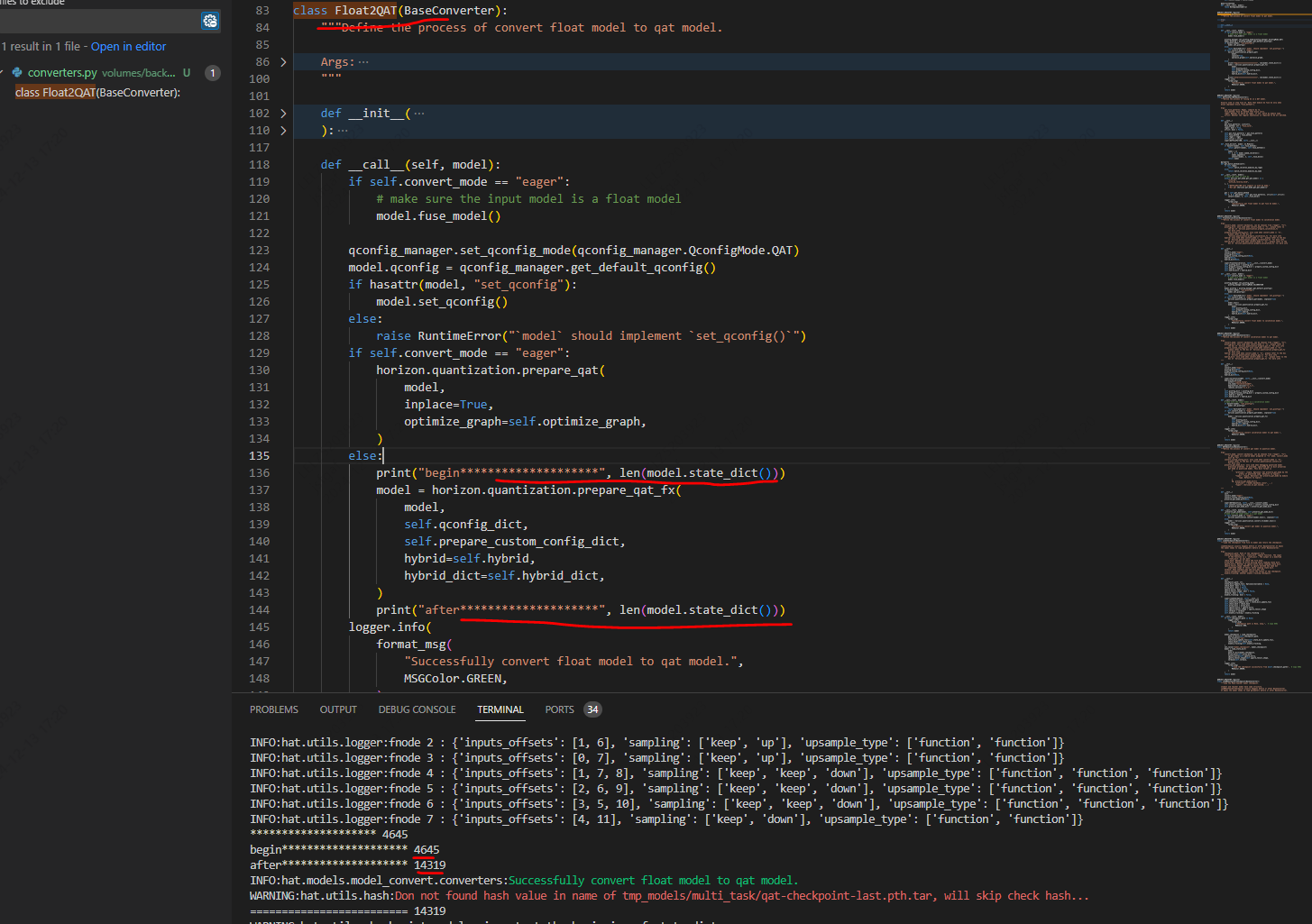

Hello, when compiling the model with compile_perf.py, I ran into a problem where keys in the model are missing, as shown in the attached screenshots:

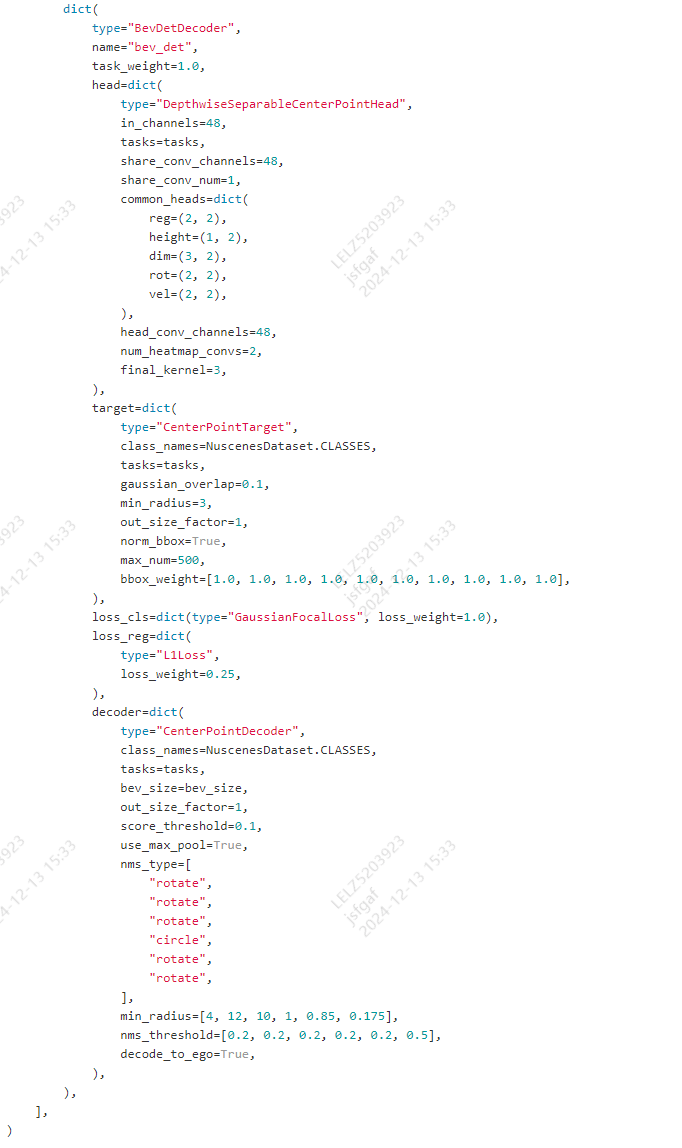

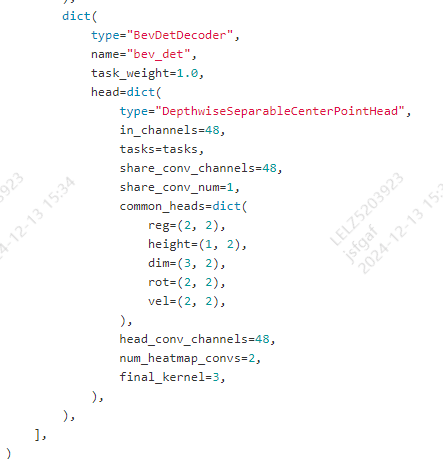

My `deploy_model` and `int_infer_trainer` are as follows:

    import copy

    # deploy_model: the training `model` config with target decoding and loss
    # computation removed, so only the inference path gets compiled.
    # `model`, `convert_mode`, `batch_processor` and `ckpt_callback` are
    # defined elsewhere in the same config file.
    deploy_model = copy.deepcopy(model)
    deploy_model["compile_model"] = True
    deploy_model["decoders"][0]["losses"] = None
    deploy_model["decoders"][0]["postprocess"] = None
    deploy_model["decoders"][1]["target"] = None
    deploy_model["decoders"][1]["loss"] = None
    deploy_model["decoders"][1]["decoder"] = None
    deploy_model["decoders"][2]["target"] = None
    deploy_model["decoders"][2]["loss"] = None
    deploy_model["decoders"][2]["decoder"] = None
    deploy_model["decoders"][3]["target"] = None
    deploy_model["decoders"][3]["loss_cls"] = None
    deploy_model["decoders"][3]["loss_reg"] = None
    deploy_model["decoders"][3]["decoder"] = None

    int_infer_trainer = dict(
        type="Trainer",
        model=deploy_model,
        model_convert_pipeline=dict(
            type="ModelConvertPipeline",
            qat_mode="fuse_bn",
            converters=[
                dict(type="Float2QAT", convert_mode=convert_mode),
                dict(
                    type="LoadCheckpoint",
                    checkpoint_path="./tmp_models/multi_task/qat-checkpoint-epoch-0000-4bce8b75.pth.tar",
                    allow_miss=True,
                    ignore_extra=True,
                ),
                dict(type="QAT2Quantize", convert_mode=convert_mode),
            ],
        ),
        data_loader=None,
        optimizer=None,
        batch_processor=batch_processor,
        num_epochs=0,
        device=None,
        callbacks=[ckpt_callback],
    )
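
The repeated `None` assignments above can also be written as a table-driven helper, which makes it harder to skip or misspell a field. This is a sketch only: `TRAIN_ONLY_FIELDS` and `make_deploy_model` are hypothetical names, and the field names are copied from the config above. Unlike the plain assignments, it raises `KeyError` when a listed field does not exist, so a typo cannot silently add a new key to the config.

```python
import copy

# Hypothetical table: for each decoder index, the training-only fields that the
# deploy config sets to None (mirrors the assignments in the config above).
TRAIN_ONLY_FIELDS = {
    0: ("losses", "postprocess"),
    1: ("target", "loss", "decoder"),
    2: ("target", "loss", "decoder"),
    3: ("target", "loss_cls", "loss_reg", "decoder"),
}


def make_deploy_model(model: dict, train_only_fields=TRAIN_ONLY_FIELDS) -> dict:
    """Return a deep copy of `model` with compile_model enabled and the
    training-only decoder fields cleared. The input dict is left unchanged."""
    deploy = copy.deepcopy(model)
    deploy["compile_model"] = True
    for idx, fields in train_only_fields.items():
        decoder = deploy["decoders"][idx]
        for field in fields:
            if field not in decoder:
                # Fail loudly on a misspelled field instead of adding a new key.
                raise KeyError(f"decoders[{idx}] has no field {field!r}")
            decoder[field] = None
    return deploy
```

In the config file this would replace the block of assignments with `deploy_model = make_deploy_model(model)`.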

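When building `deploy_model`, I removed the parts of the decoders that decode targets and compute losses; these parts have no parameters. I don't understand whether the missing keys shown in the screenshots are expected. If they are not, are the missing keys the parameters of the fields I set to None?

But those parts are only Python code that decodes the model outputs, with no parameters. How should I resolve this?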
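
One way to check where the reported keys come from is to compare the key names of the model's state dict with those stored in the checkpoint and group the differences by prefix. In plain PyTorch, `Module.load_state_dict(state_dict, strict=False)` returns `missing_keys` (expected by the model, absent from the checkpoint) and `unexpected_keys` (present in the checkpoint, unused by the model). Under that convention, removing submodules from the deploy model can only shrink the model's key set, so it can produce unexpected keys but never missing ones; modules without parameters or buffers contribute no keys at all. Whether `LoadCheckpoint`'s `allow_miss`/`ignore_extra` follow the same convention is an assumption suggested by the option names, not something verified here. The helper name `group_key_diff` and its `depth` parameter are hypothetical; it only does set arithmetic on key lists.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def group_key_diff(
    model_keys: Iterable[str],
    ckpt_keys: Iterable[str],
    depth: int = 2,
) -> Tuple[Dict[str, List[str]], Dict[str, List[str]]]:
    """Compare state-dict key names and group the differences by prefix.

    missing:    keys the model expects but the checkpoint lacks
    unexpected: keys in the checkpoint that the model does not use
    Keys are grouped by their first `depth` dot-separated components,
    e.g. "decoders.1" for "decoders.1.head.conv.weight" when depth=2.
    """
    model_set, ckpt_set = set(model_keys), set(ckpt_keys)

    def group(keys):
        groups = defaultdict(list)
        for key in sorted(keys):
            prefix = ".".join(key.split(".")[:depth])
            groups[prefix].append(key)
        return dict(groups)

    return group(model_set - ckpt_set), group(ckpt_set - model_set)
```

With PyTorch, `model_keys` could be `model.state_dict().keys()` after the Float2QAT conversion, and `ckpt_keys` the keys of the state dict stored in the checkpoint file (where exactly it sits inside the `.pth.tar` depends on how the checkpoint was saved). If the missing keys cluster under backbone or head prefixes rather than the decoder fields set to None, the mismatch likely lies elsewhere, for example in a difference between the model structure at save time and at load time.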

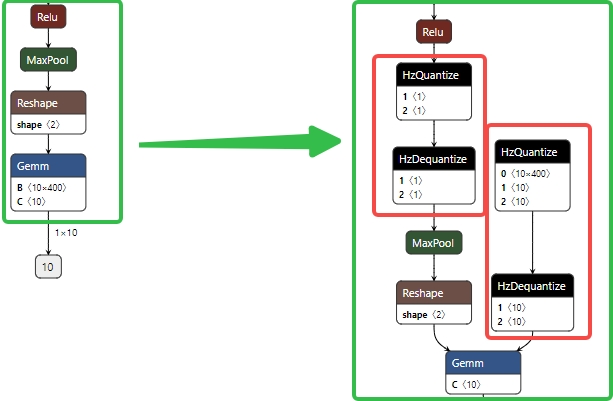

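2. Fake-quantization nodes are inserted automatically: the toolchain analyzes on its own which nodes need to be quantized. From the toolchain's logic, `fuse_model` is only used in "eager" mode; in "fx" mode, nodes that can be fused are fused automatically. A quantization config is still required in either mode.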
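
To illustrate the difference: in eager mode the user states which modules to fuse, which is what a `fuse_model` method does (typically by passing explicit module-name lists to a function such as PyTorch's `torch.ao.quantization.fuse_modules`), while in fx mode the tool traces the model into a graph and finds fusable patterns itself. The toy below mimics only that automatic pattern search on a flat list of op names. The op names, `FUSABLE_PATTERNS`, and `find_fusable` are hypothetical and this is not the toolchain's implementation; real fx fusion works on a traced graph and also checks that intermediate outputs are not consumed elsewhere.

```python
from typing import List, Sequence, Tuple

# Illustrative fusable patterns, longest first so conv-bn-relu wins over conv-bn.
FUSABLE_PATTERNS: Tuple[Tuple[str, ...], ...] = (
    ("conv", "bn", "relu"),
    ("conv", "bn"),
    ("conv", "relu"),
)


def find_fusable(ops: Sequence[str]) -> List[Tuple[int, int]]:
    """Greedily scan a linear op sequence and return (start, end) index ranges,
    end exclusive, that match a fusable pattern. A toy stand-in for the pattern
    matching an fx-mode tool performs on the traced graph."""
    spans = []
    i = 0
    while i < len(ops):
        for pattern in FUSABLE_PATTERNS:
            if tuple(ops[i:i + len(pattern)]) == pattern:
                spans.append((i, i + len(pattern)))
                i += len(pattern)  # ops inside a fused group are consumed
                break
        else:
            i += 1  # no pattern starts here; move on
    return spans
```

For example, `find_fusable(["conv", "bn", "relu", "conv", "relu", "add"])` finds the groups `(0, 3)` and `(3, 5)` without the user listing them, whereas in eager mode the same groups would have to be written out by hand in `fuse_model`.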
