My model converted to ONNX successfully, and I have verified the result.

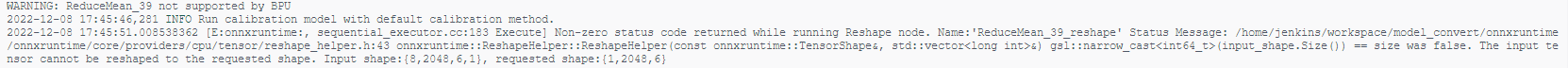

Now converting the ONNX model to a .bin file fails.

The problems I ran into:

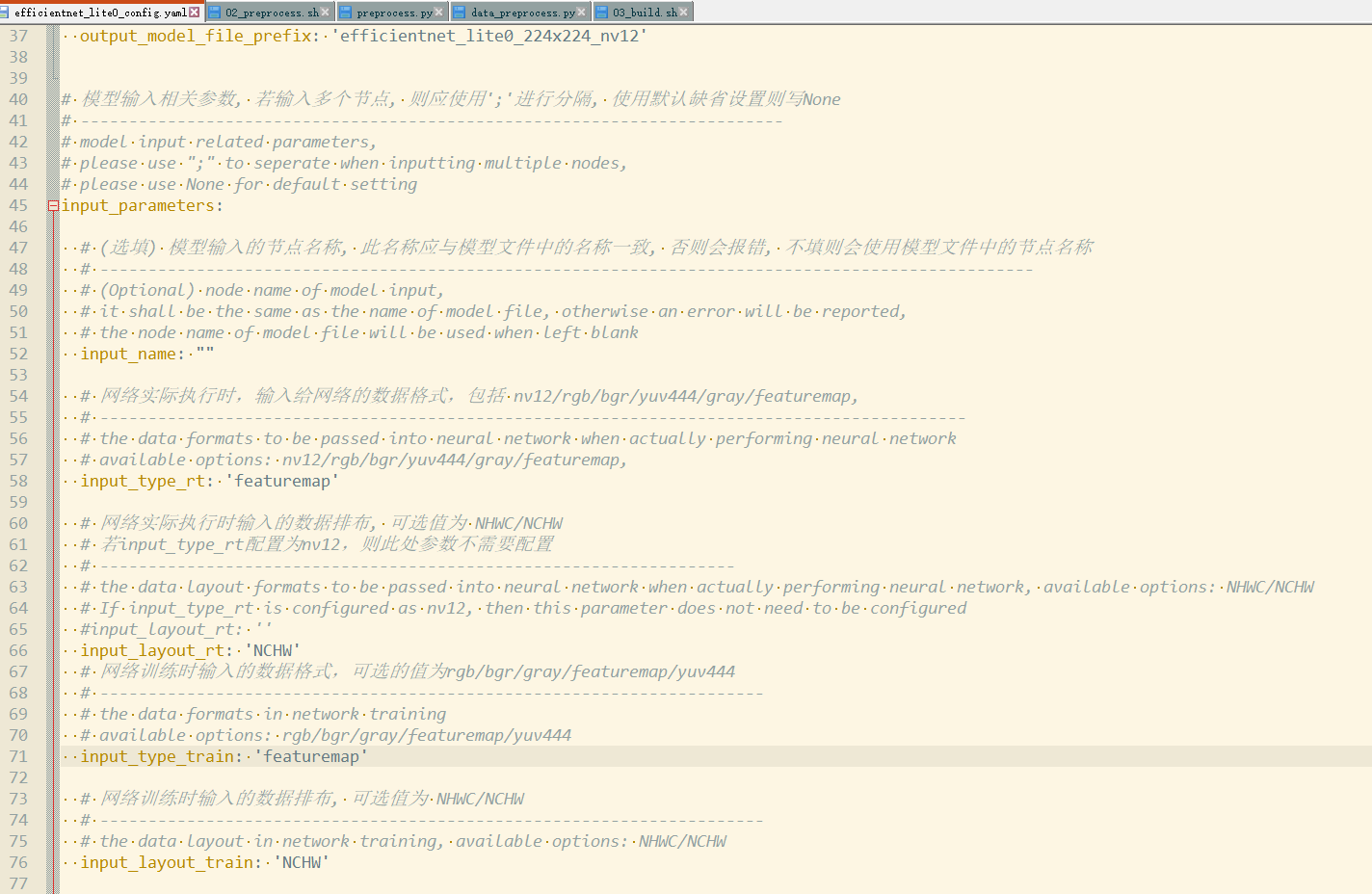

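1. My model's input is a mel spectrogram. It is float32, and its values are not in the 0-255 range.

How should I modify efficientnet_lite0_config.yaml (see attachment)?

2. Alternatively, is it possible to skip quantization and convert the ONNX model directly to a .bin file?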

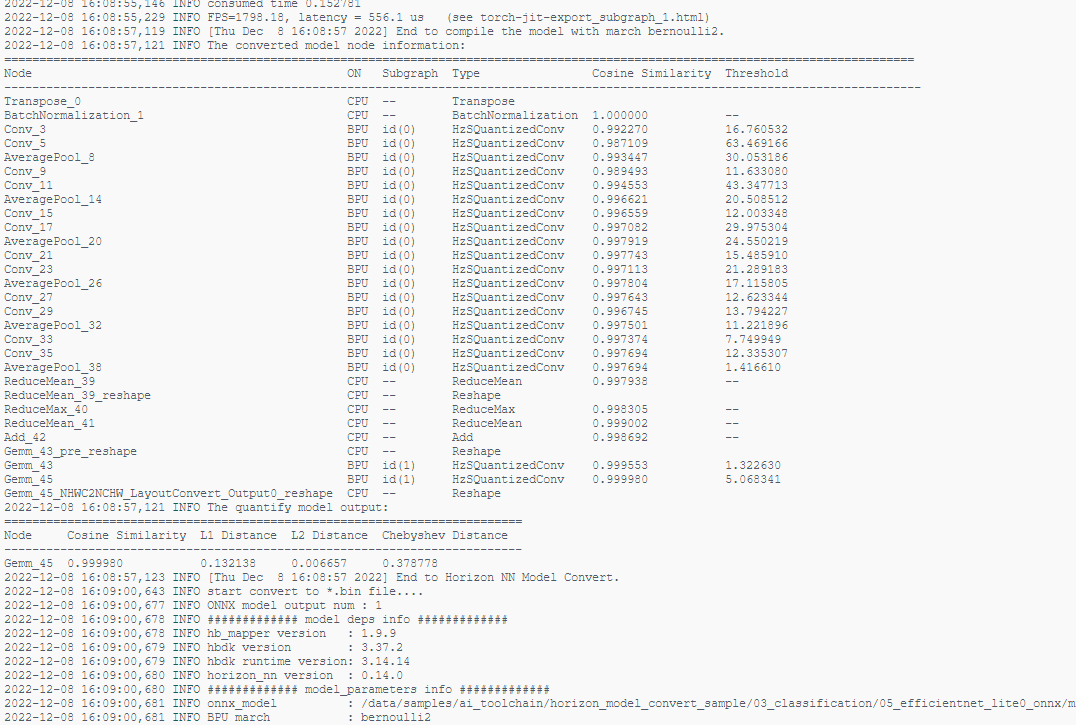
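For question 1, here is a rough sketch of how float32 calibration samples for a mel-spectrogram input could be written to disk. It assumes the toolchain reads raw float32 files from `cal_data_dir` when `cal_data_type` is `float32`, which I have not confirmed. The shape 1x1x201x64 is the `input_shape` reported in the log further down. The helper names and the random stand-in data are made up for illustration; real mel spectrograms from the training pipeline should replace them.

```python
import tempfile
from pathlib import Path

import numpy as np

# input_shape taken from the conversion log (NCHW, single channel).
INPUT_SHAPE = (1, 1, 201, 64)


def save_calibration_sample(mel, out_path):
    """Write one mel spectrogram as a raw float32 file (hypothetical helper)."""
    arr = np.ascontiguousarray(mel, dtype=np.float32).reshape(INPUT_SHAPE)
    arr.tofile(out_path)  # raw bytes only, no header
    return arr


def load_calibration_sample(path):
    """Read a raw float32 file back, to check what was actually stored."""
    return np.fromfile(path, dtype=np.float32).reshape(INPUT_SHAPE)


# Stand-in data: random values on a dB-like scale, NOT real spectrograms.
rng = np.random.default_rng(0)
out_dir = Path(tempfile.mkdtemp())
for i in range(4):
    fake_mel = rng.normal(loc=-40.0, scale=20.0, size=(201, 64))
    save_calibration_sample(fake_mel, out_dir / f"sample_{i}.bin")

files = sorted(out_dir.glob("*.bin"))
restored = load_calibration_sample(files[0])
print(len(files), restored.shape, restored.dtype)
```

The calibration samples should go through exactly the same preprocessing as the inputs used to verify the ONNX model, otherwise the calibrated thresholds will not match the real value range.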

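The output of 03.build.sh is shown below. The conversion appears to succeed, but the output differs greatly from that of the ONNX model, so the result is unusable.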

2022-12-06 14:52:46,872 INFO Reset batch_size=1 and execute calibration again...

2022-12-06 14:58:15,422 INFO Select max method.

2022-12-06 14:58:15,776 INFO [Tue Dec 6 14:58:15 2022] End to calibrate the model.

2022-12-06 14:58:15,777 INFO [Tue Dec 6 14:58:15 2022] Start to quantize the model.

2022-12-06 14:58:37,202 INFO [Tue Dec 6 14:58:37 2022] End to quantize the model.

2022-12-06 14:58:42,803 INFO Saving the quantized model: efficientnet_lite0_224x224_nv12_quantized_model.onnx.

2022-12-06 14:58:52,130 INFO [Tue Dec 6 14:58:52 2022] Start to compile the model with march bernoulli2.

2022-12-06 14:58:56,280 INFO Compile submodel: torch-jit-export_subgraph_0

2022-12-06 14:59:02,053 INFO hbdk-cc parameters:['--core-num', '1', '--fast', '--O3', '--input-layout', 'NHWC', '--output-layout', 'NCHW', '--input-source', 'ddr']

2022-12-06 14:59:02,111 INFO INFO: "-j" or "--jobs" is not specified, launch 16 threads for optimization

[==================================================] 100%

2022-12-06 14:59:06,362 INFO consumed time 4.27701

2022-12-06 14:59:06,838 INFO FPS=32.75, latency = 30534.8 us (see torch-jit-export_subgraph_0.html)

2022-12-06 14:59:06,844 INFO Compile submodel: torch-jit-export_subgraph_1

2022-12-06 14:59:07,695 INFO hbdk-cc parameters:['--core-num', '1', '--fast', '--O3', '--input-layout', 'NHWC', '--output-layout', 'NHWC', '--input-source', 'ddr']

2022-12-06 14:59:07,728 INFO INFO: "-j" or "--jobs" is not specified, launch 16 threads for optimization

[==================================================] 100%

2022-12-06 14:59:07,872 INFO consumed time 0.146057

2022-12-06 14:59:07,952 INFO FPS=1798.18, latency = 556.1 us (see torch-jit-export_subgraph_1.html)

2022-12-06 14:59:09,823 INFO [Tue Dec 6 14:59:09 2022] End to compile the model with march bernoulli2.

2022-12-06 14:59:09,825 INFO The converted model node information:

| Node | ON | Subgraph | Type | Cosine Similarity | Threshold |
|---|---|---|---|---|---|
| Transpose_0 | CPU | -- | Transpose | | |
| BatchNormalization_1 | CPU | -- | BatchNormalization | 1.000000 | -- |
| Conv_3 | BPU | id(0) | HzSQuantizedConv | 0.994158 | 4.039474 |
| Conv_5 | BPU | id(0) | HzSQuantizedConv | 0.988520 | 73.934135 |
| AveragePool_8 | BPU | id(0) | HzSQuantizedConv | 0.991906 | 21.153534 |
| Conv_9 | BPU | id(0) | HzSQuantizedConv | 0.983432 | 8.645608 |
| Conv_11 | BPU | id(0) | HzSQuantizedConv | 0.984774 | 31.801315 |
| AveragePool_14 | BPU | id(0) | HzSQuantizedConv | 0.987688 | 22.935102 |
| Conv_15 | BPU | id(0) | HzSQuantizedConv | 0.988757 | 13.219603 |
| Conv_17 | BPU | id(0) | HzSQuantizedConv | 0.986877 | 15.482847 |
| AveragePool_20 | BPU | id(0) | HzSQuantizedConv | 0.987965 | 17.494753 |
| Conv_21 | BPU | id(0) | HzSQuantizedConv | 0.986419 | 14.433695 |
| Conv_23 | BPU | id(0) | HzSQuantizedConv | 0.984016 | 24.406654 |
| AveragePool_26 | BPU | id(0) | HzSQuantizedConv | 0.980193 | 28.904232 |
| Conv_27 | BPU | id(0) | HzSQuantizedConv | 0.982191 | 26.671112 |
| Conv_29 | BPU | id(0) | HzSQuantizedConv | 0.980930 | 21.382399 |
| AveragePool_32 | BPU | id(0) | HzSQuantizedConv | 0.982146 | 20.134457 |
| Conv_33 | BPU | id(0) | HzSQuantizedConv | 0.964356 | 14.805241 |
| Conv_35 | BPU | id(0) | HzSQuantizedConv | 0.980975 | 58.668331 |
| AveragePool_38 | BPU | id(0) | HzSQuantizedConv | 0.980975 | 0.960493 |
| ReduceMean_39 | CPU | -- | ReduceMean | 0.983716 | -- |
| ReduceMean_39_reshape | CPU | -- | Reshape | | |
| ReduceMax_40 | CPU | -- | ReduceMax | 0.989400 | -- |
| ReduceMean_41 | CPU | -- | ReduceMean | 0.986529 | -- |
| Add_42 | CPU | -- | Add | 0.989427 | -- |
| Gemm_43_pre_reshape | CPU | -- | Reshape | | |
| Gemm_43 | BPU | id(1) | HzSQuantizedConv | 0.998293 | 0.857610 |
| Gemm_45 | BPU | id(1) | HzSQuantizedConv | 0.999911 | 5.329660 |
| Gemm_45_NHWC2NCHW_LayoutConvert_Output0_reshape | CPU | -- | Reshape | | |

2022-12-06 14:59:09,825 INFO The quantify model output:

| Node | Cosine Similarity | L1 Distance | L2 Distance | Chebyshev Distance |
|---|---|---|---|---|
| Gemm_45 | 0.999911 | 0.107928 | 0.005794 | 0.441083 |

2022-12-06 14:59:09,827 INFO [Tue Dec 6 14:59:09 2022] End to Horizon NN Model Convert.
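The tool reports a cosine similarity of 0.999911 for the final node Gemm_45, so on the calibration data the quantized model tracks the float model closely. To check whether the same holds for the outputs I actually compare, the metrics can be recomputed by hand. The sketch below uses the textbook definitions of the four metrics named in the log. The tool's own L1 and L2 figures may be normalized differently, so only the cosine similarity is likely to be directly comparable. `compare_outputs` is a hypothetical helper, not part of the toolchain.

```python
import numpy as np


def compare_outputs(ref, test):
    """Compare two output tensors of the same size (textbook definitions)."""
    a = np.asarray(ref, dtype=np.float64).ravel()
    b = np.asarray(test, dtype=np.float64).ravel()
    diff = np.abs(a - b)
    cosine = float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))
    return {
        "cosine": cosine,                     # 1.0 means same direction
        "l1": float(diff.sum()),              # sum of absolute differences
        "l2": float(np.linalg.norm(a - b)),   # Euclidean distance
        "chebyshev": float(diff.max()),       # largest single difference
    }


# Toy vectors standing in for the ONNX output and the .bin output.
onnx_out = np.array([0.1, 2.5, -1.0, 0.7])
bin_out = np.array([0.1, 2.4, -0.9, 0.7])
print(compare_outputs(onnx_out, bin_out))
```

If the cosine similarity measured this way is far below what the log reports, the mismatch more likely comes from how the input is prepared or fed at inference time than from quantization itself.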

2022-12-06 14:59:12,512 INFO start convert to *.bin file....

2022-12-06 14:59:12,548 INFO ONNX model output num : 1

2022-12-06 14:59:12,549 INFO ############# model deps info #############

2022-12-06 14:59:12,549 INFO hb_mapper version : 1.9.9

2022-12-06 14:59:12,550 INFO hbdk version : 3.37.2

2022-12-06 14:59:12,550 INFO hbdk runtime version: 3.14.14

2022-12-06 14:59:12,551 INFO horizon_nn version : 0.14.0

2022-12-06 14:59:12,551 INFO ############# model_parameters info #############

2022-12-06 14:59:12,551 INFO onnx_model : /data/samples/ai_toolchain/horizon_model_convert_sample/03_classification/05_efficientnet_lite0_onnx/mapper/super_resolution.onnx

2022-12-06 14:59:12,551 INFO BPU march : bernoulli2

2022-12-06 14:59:12,552 INFO layer_out_dump : False

2022-12-06 14:59:12,552 INFO log_level : DEBUG

2022-12-06 14:59:12,552 INFO working dir : /data/samples/ai_toolchain/horizon_model_convert_sample/03_classification/05_efficientnet_lite0_onnx/mapper/model_output

2022-12-06 14:59:12,553 INFO output_model_file_prefix: efficientnet_lite0_224x224_nv12

2022-12-06 14:59:12,553 INFO ############# input_parameters info #############

2022-12-06 14:59:12,553 INFO ------------------------------------------

2022-12-06 14:59:12,554 INFO ---------input info : input ---------

2022-12-06 14:59:12,554 INFO input_name : input

2022-12-06 14:59:12,554 INFO input_type_rt : featuremap

2022-12-06 14:59:12,555 INFO input_space&range : regular

2022-12-06 14:59:12,555 INFO input_layout_rt : NHWC

2022-12-06 14:59:12,555 INFO input_type_train : featuremap

2022-12-06 14:59:12,555 INFO input_layout_train : NCHW

2022-12-06 14:59:12,556 INFO norm_type : no_preprocess

2022-12-06 14:59:12,556 INFO input_shape : 1x1x201x64

2022-12-06 14:59:12,556 INFO cal_data_dir : /data/samples/ai_toolchain/horizon_model_convert_sample/03_classification/05_efficientnet_lite0_onnx/mapper/calibration_data_rgb_f32

2022-12-06 14:59:12,557 INFO ---------input info : input end -------

2022-12-06 14:59:12,557 INFO ------------------------------------------

2022-12-06 14:59:12,557 INFO ############# calibration_parameters info #############

2022-12-06 14:59:12,558 INFO preprocess_on : False

2022-12-06 14:59:12,558 INFO calibration_type: : default

2022-12-06 14:59:12,558 INFO cal_data_type : float32

2022-12-06 14:59:12,559 INFO ############# compiler_parameters info #############

2022-12-06 14:59:12,559 INFO hbdk_pass_through_params: --core-num 1 --fast --O3

2022-12-06 14:59:12,559 INFO input-source : {'input': 'ddr', '_default_value': 'ddr'}

2022-12-06 14:59:12,588 WARNING Except for Quanitze/Dequantize and transpose, all other op's are treated as nchw layout by default!!!

2022-12-06 14:59:12,588 INFO NCHW to NHWC transpose node will be added for input 0 in bin model

2022-12-06 14:59:13,697 INFO Convert to runtime bin file sucessfully!

2022-12-06 14:59:13,698 INFO End Model Convert
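One thing I wanted to rule out is the input layout. The log shows `input_layout_train : NCHW` but `input_layout_rt : NHWC`, and says an NCHW to NHWC transpose node is added for input 0. A small NumPy sketch of that conversion (`nchw_to_nhwc` is a hypothetical helper) suggests that for a single-channel input such as 1x1x201x64 the two layouts hold the same bytes in the same order, so layout alone would not explain the difference here. With more than one channel the order does change.

```python
import numpy as np


def nchw_to_nhwc(x):
    """Reorder a 4-D array from (N, C, H, W) to (N, H, W, C)."""
    return np.ascontiguousarray(np.transpose(x, (0, 2, 3, 1)))


# Single-channel case, same shape as the model input in the log.
mel = np.arange(1 * 1 * 201 * 64, dtype=np.float32).reshape(1, 1, 201, 64)
mel_nhwc = nchw_to_nhwc(mel)
same_bytes_c1 = mel_nhwc.tobytes() == mel.tobytes()

# Three-channel case for contrast: the memory order is different.
rgb = np.arange(1 * 3 * 4 * 4, dtype=np.float32).reshape(1, 3, 4, 4)
same_bytes_c3 = nchw_to_nhwc(rgb).tobytes() == rgb.tobytes()

print(mel_nhwc.shape, same_bytes_c1, same_bytes_c3)
```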