grid = self.normgrid(pos, H, W).unsqueeze(-2).to(x.dtype)

x = F.grid_sample(x, grid, mode=self.mode, align_corners=False)

Here I printed the input x; the sizes printed are:

torch.Size([1, 1, 288, 384])

torch.Size([1, 1, 36, 48])

torch.Size([1, 64, 36, 48])

The values all look normal, but running 01_check.sh fails with the following error:

2025-07-21 09:57:27,466 INFO log will be stored in /open_explorer/xfeat/hb_mapper_checker.log

2025-07-21 09:57:27,466 INFO Start hb_mapper…

2025-07-21 09:57:27,466 INFO hbdk version 3.49.15

2025-07-21 09:57:27,466 INFO horizon_nn version 1.1.0

2025-07-21 09:57:27,466 INFO hb_mapper version 1.24.3

2025-07-21 09:57:27,480 INFO Model type: onnx

2025-07-21 09:57:27,480 INFO input names

2025-07-21 09:57:27,480 INFO input shapes {}

2025-07-21 09:57:27,480 INFO Begin model checking…

2025-07-21 09:57:27,491 INFO Start to Horizon NN Model Convert.

2025-07-21 09:57:27,570 INFO Loading horizon_nn debug methods:set()

2025-07-21 09:57:27,571 INFO The activation calibration parameters:

calibration_type: fixed

2025-07-21 09:57:27,571 INFO The specified model compilation architecture: bayes-e.

2025-07-21 09:57:27,571 INFO The specified model compilation optimization parameters: .

2025-07-21 09:57:27,572 INFO Start to prepare the onnx model.

2025-07-21 09:57:27,600 INFO Input ONNX Model Information:

ONNX IR version: 6

Opset version: ['ai.onnx v11', 'horizon v1']

Producer: pytorch v1.13.0

Domain: None

Version: None

Graph input:

image0: shape=[1, 3, 300, 400], dtype=FLOAT32

image1: shape=[1, 3, 300, 400], dtype=FLOAT32

Graph output:

mkpts0: shape=['num_keypoints', 'Gathermkpts0_dim_1'], dtype=FLOAT32

mkpts1: shape=['num_keypoints', 'Gathermkpts1_dim_1'], dtype=FLOAT32

2025-07-21 09:57:27,600 INFO Modify argmax output element type from int32 to int64 to make sure this onnx model will be valid.

2025-07-21 09:57:27,735 INFO End to prepare the onnx model.

2025-07-21 09:57:27,766 INFO Saving model to: ./.hb_check/original_float_model.onnx.

2025-07-21 09:57:27,766 INFO Start to optimize the onnx model.

2025-07-21 09:57:28,828 INFO End to optimize the onnx model.

2025-07-21 09:57:28,853 INFO Saving model to: ./.hb_check/optimized_float_model.onnx.

2025-07-21 09:57:28,853 INFO Start to calibrate the model.

2025-07-21 09:57:28,994 WARNING The input0 of Node(name:/GridSample_1, type:GridSample) does not support data type: int16

2025-07-21 09:57:28,995 WARNING The input0 of Node(name:/interpolator/GridSample, type:GridSample) does not support data type: int16

2025-07-21 09:57:28,995 WARNING The input0 of Node(name:/GridSample_3, type:GridSample) does not support data type: int16

2025-07-21 09:57:28,996 WARNING The input0 of Node(name:/interpolator_1/GridSample, type:GridSample) does not support data type: int16

2025-07-21 09:57:28,996 WARNING The output of Node(name:/net/heatmap_head/heatmap_head.3/Sigmoid) is int16, then requantized to int8

2025-07-21 09:57:28,996 WARNING The output of Node(name:/Div_mul) is int16, then requantized to int8

2025-07-21 09:57:28,997 INFO There are 1 samples in the data set.

2025-07-21 09:57:28,997 INFO Run calibration model with fixed thresholds method.

Layer /GridSample

/GridSample layer, feature size in axis 1 should in range [1, 4096]. But given size 4294967295

/GridSample layer, feature size in axis 1 should in range [1, 4096]. But given size 4294967295

/GridSample_1 layer, feature size in axis 1 should in range [1, 4096]. But given size 4294967295

/GridSample_1 layer, feature size in axis 1 should in range [1, 4096]. But given size 4294967295

2025-07-21 09:57:29,073 WARNING The output of Node(name:/Div_3_mul) is int16, then requantized to int8

2025-07-21 09:57:29,077 ERROR *** ERROR-OCCUR-DURING {horizon_nn.build_onnx} ***, error message: ERROR: The size of specified_perm is different with input dim

The error model has been saved as complement_calibration_node_for_graph_input_pass_fail.onnx

2025-07-21 09:57:29,077 INFO End to calibrate the model.

2025-07-21 09:57:29,078 INFO End to Horizon NN Model Convert.

How can I fix this? Is it something like an overflow when quantizing to integers? The value 4294967295 is huge.

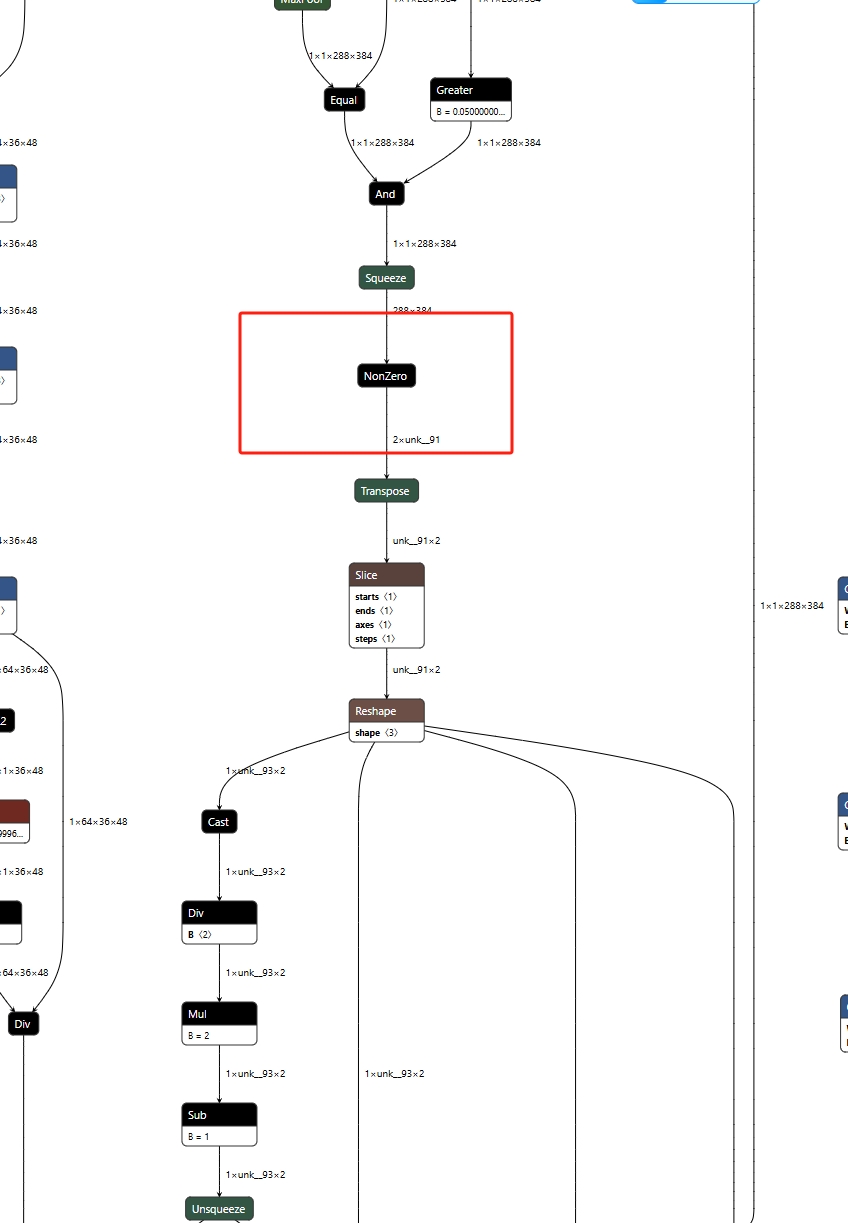

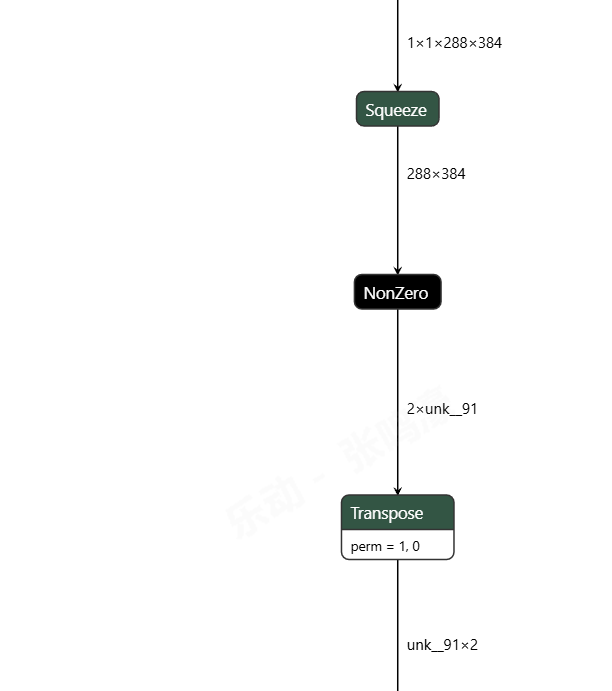
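One thing worth checking about the 4294967295 in the log: it is exactly 2^32 - 1, which is what a signed 32-bit -1 looks like when read as an unsigned integer. The sketch below is plain Python, not part of the Horizon toolchain, and only demonstrates that arithmetic. That the checker stores -1 for a channel size it could not resolve is my assumption; the log does not confirm it.

```python
import struct

UINT32_MAX = 2**32 - 1

# Pack signed -1 into 4 bytes, then read the same bytes back as unsigned.
as_unsigned = struct.unpack("<I", struct.pack("<i", -1))[0]

print(UINT32_MAX)                 # largest unsigned 32-bit value
print(as_unsigned == UINT32_MAX)  # same bit pattern as signed -1
```

If that assumption holds, the number would point to an unknown axis-1 size on the GridSample input rather than to a quantization overflow.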

In the Netron visualization I noticed unk showing up. Could that be the cause? What does unk mean?

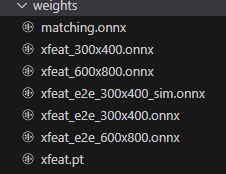
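On the unk question: my guess is that it marks a dimension without a static integer size, similar to the symbolic names such as 'num_keypoints' in the "Graph output" part of the log. A minimal sketch for listing such dimensions follows. `non_static_dims` and `graph_output_shapes` are hypothetical helper names introduced here for illustration; the second one needs the `onnx` package and is not called in the example.

```python
def non_static_dims(shape):
    """Return the entries of `shape` that are not positive integers."""
    return [
        d for d in shape
        if not (isinstance(d, int) and not isinstance(d, bool) and d > 0)
    ]


def graph_output_shapes(path):
    """Hypothetical helper: read the output shapes of an ONNX file."""
    import onnx  # imported lazily so the rest runs without the package

    model = onnx.load(path)
    shapes = {}
    for out in model.graph.output:
        dims = []
        for d in out.type.tensor_type.shape.dim:
            # dim_value holds a static size, dim_param a symbolic name.
            if d.HasField("dim_value"):
                dims.append(d.dim_value)
            elif d.HasField("dim_param"):
                dims.append(d.dim_param)
            else:
                dims.append(None)  # dimension left unspecified
        shapes[out.name] = dims
    return shapes


# Shapes copied from the "Graph output" section of the log.
logged = {
    "mkpts0": ["num_keypoints", "Gathermkpts0_dim_1"],
    "mkpts1": ["num_keypoints", "Gathermkpts1_dim_1"],
}
for name, shape in logged.items():
    print(name, non_static_dims(shape))
```

Running the same check on intermediate tensors near the GridSample nodes might show whether their channel dimension is also non-static.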

Which ONNX model is the one you generated and plan to use in the next step?